Preventing agent drift: A guide to shipping serious code via vibe-coding

Agents need rules, structures, and frameworks to help them build big and we aren't using enough of them.

Thank you, Steve, for the excellent article "AI is forcing us to write good code". It helped shape several of the ideas in this write-up.

1. What is the problem?

Agentic coding has improved dramatically in the past few months. I find myself using Cursor, Claude Code, and Codex far more than I anticipated, and my stats show that over 95% of my committed code is written by agents. Most of the code I write is in Java, so this article focuses solely on enterprise-grade Java code.

AI slop has been a huge problem for me - not just while writing code myself but also while reviewing others' code. I find that agents are great at building 0-to-1 products but quickly get out of hand as the product matures, or when they are used in existing large enterprise repos.

I call this "getting out of hand" "agentic drift" - a term for agents going off the rails. A minor mistake in the code or a hacky design pattern quickly pushes the agent towards writing code that becomes unmaintainable. Put more precisely: all agentic code contains a proportion of "well-written" code and a proportion of "poorly-written" code, just as a human's would. Poorly written here does not mean functionally incorrect; it means code that you would find putrid, overengineered, and tasteless.

Every flaw in the code quickly builds on top of the previous one if you truly "vibe" your way to code completion. This fares poorly if you expect your code to last for a while: new features and improvements get harder to build, and managing agents becomes increasingly chaotic. Human-in-the-loop manual review works well: making corrections and cleanups by hand after every prompt and agent code update helps steer future agent updates in the right direction. But I find myself hating this cognitive overload, so the goal here is to reduce it.

2. What is the impact? - Why does this problem need to be solved?

Vibe-coding is definitely here to stay. To have this higher level of abstraction work for long-lived code, it becomes imperative to keep the ground solid while at the same time allowing the agent to spit out features rapidly.

We need a set of guardrails and harnesses to steer agents in the right direction.

I will not be talking about Skills, Rules, and other prompt-based helpers. They definitely bring structure to agentic coding, but we need something stronger. The Java community has spent decades building infrastructure for deterministic rule frameworks, and that is exactly what we need to steer agents in the right direction: a foundation on which to build big. The goal is to let agents vibe code and "express" themselves through rapid iterations while deterministic rule frameworks keep them balanced.

3. How do we solve the problem?

All we need to do is throw all kinds of deterministic frameworks and rules at the agents. The more of these we throw, the better our agents will be steered towards long-term vibe-coding success.

Types & Compile safety

Java already has a default structure baked in via strong types and compile-time safety. Any syntax or type error that the agent makes is caught quickly and fed back to it, and the agent self-corrects. This is the level-1 foundation, and it makes Java and frameworks such as Spring a great choice for vibe-coding: simple errors are caught in the compile phase itself.
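As a rough illustration of the compiler acting as the first feedback loop, here is a minimal sketch that uses a sealed interface with an exhaustive `switch` (it assumes Java 21 or later). The `PaymentResult` types and the `describe` method are hypothetical names invented for this example. If an agent later adds a third `PaymentResult` variant without handling it in `describe`, the build fails and the compiler error goes straight back to the agent.

```java
// Hypothetical domain types, for illustration only. Assumes Java 21+.
class PaymentMessages {
    // No default branch: the compiler verifies every permitted subtype is handled.
    static String describe(PaymentResult result) {
        return switch (result) {
            case Approved a -> "Approved: " + a.transactionId();
            case Declined d -> "Declined: " + d.reason();
        };
    }

    public static void main(String[] args) {
        System.out.println(describe(new Approved("tx-1")));
        System.out.println(describe(new Declined("insufficient funds")));
    }
}

// Only the listed records may implement this interface.
sealed interface PaymentResult permits Approved, Declined {}

record Approved(String transactionId) implements PaymentResult {}

record Declined(String reason) implements PaymentResult {}
```

Leaving out the `default` branch is the design choice that matters here: with a `default`, a new variant would compile silently and the agent would get no signal.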

Unit tests

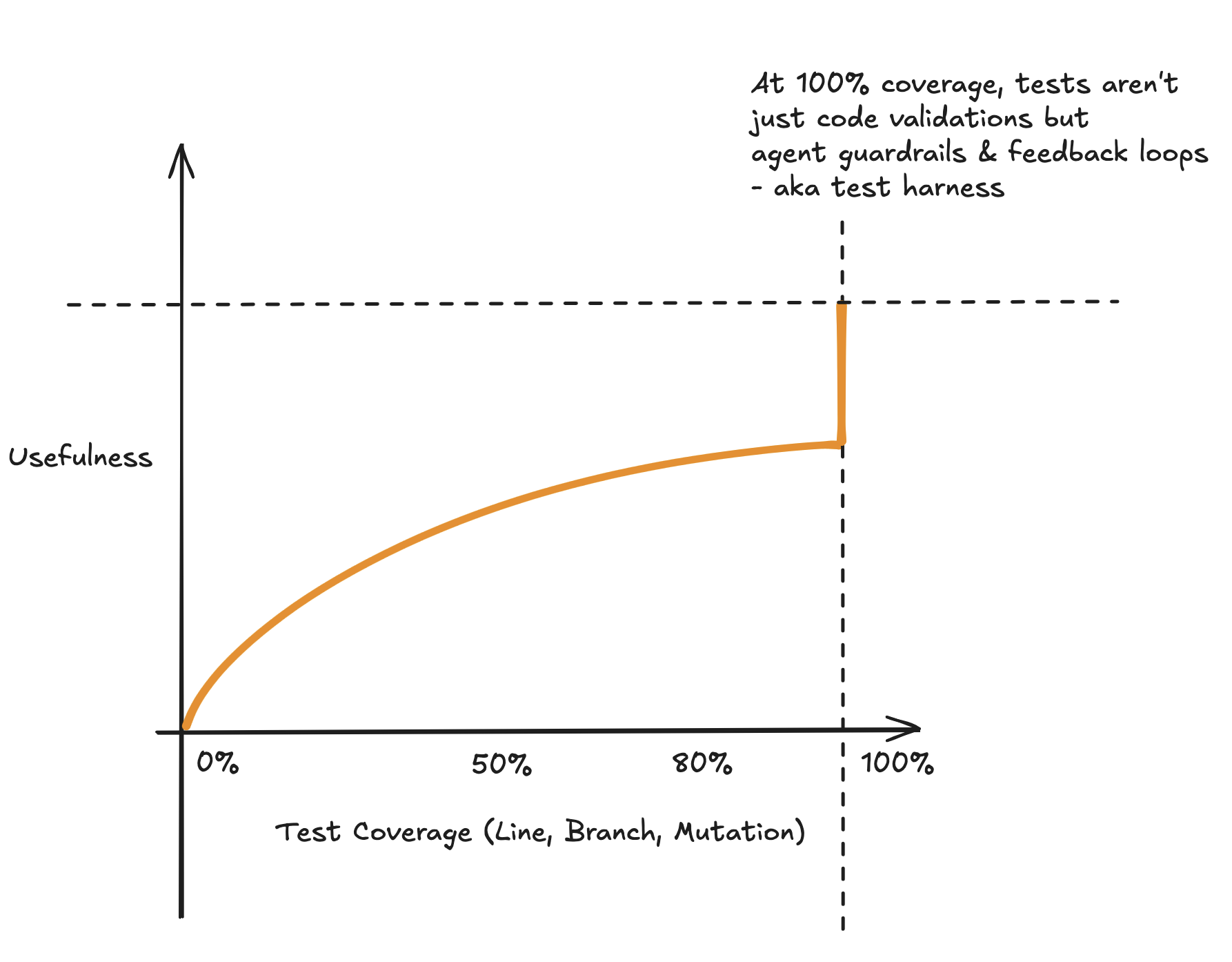

Unit tests were already important in the pre-agentic era, but they have become central to agentic coding in ways they weren't before. In the days of yore, unit tests were written either via TDD or after the code was implemented; either way, they were a means to test your code and prevent future bugs. A JaCoCo coverage of 80% was the de facto enterprise standard for a well-covered codebase.

This has changed in the agentic world. Forget TDD: both unit tests and code are now written by agents much more quickly than by a human and, in my experience, with higher quality (Claude 4 thinking is fantastic at writing tests - better than a senior developer).

If both application code and tests are written by the agent, how does the role of a unit test change? Here's where the insight of 100% test coverage kicks in. With human-written tests, going beyond 80% coverage was not worth the effort. From an ROI perspective, moving from 80% to 90% did not offer enough benefit to justify the work of writing the extra tests. But this changes when unit tests are agent-written: the effort of writing them is minimal, and moving to 100% line and branch coverage is child's play. So are the effort and the tokens spent on it worth it now?

Turns out, it's worth much more than we expected. Moving to 100% line and branch coverage served as a code lock-in: a snapshot of the entire code behaviour was stored in the form of unit tests. This offered a few benefits that were not realized earlier:

1. With 100% coverage, any new uncovered code change instantly fails the coverage threshold. This enforces tests for all newly agent-written code.

2. All leaks are plugged, so the chances of functional errors are minimal. Any disruption in functional logic is immediately caught and the error feedback is sent to the agent, which self-corrects. The "lock-in" of code ensures that few bugs creep in, with any test failure serving as feedback.

3. The existing tests pin down the design: method signatures and class interfaces have to be adhered to. New code cannot break this structure unless the agent deliberately goes for a refactor. Less-than-perfect line and branch coverage creates micro tears in the system through which sub-optimal code can slip.

Achieving a perfect coverage score across all classes is as simple as using existing coverage reporting tools such as JaCoCo and passing the reports back to the agent so it can close the gaps.
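One way to pass the report back could look like the sketch below. It reads a JaCoCo CSV report and turns every class with missed lines or branches into a one-line message that can be pasted into (or piped to) the agent. The `CoverageFeedback` class and its message format are my own illustration, not part of JaCoCo. The default path assumes the Maven plugin's usual output location, and the naive comma split assumes no field contains a comma.

```java
import java.nio.file.Files;
import java.nio.file.Path;
import java.util.ArrayList;
import java.util.Arrays;
import java.util.List;

// Hypothetical helper: converts a JaCoCo CSV report into feedback for the agent.
class CoverageFeedback {
    static List<String> uncovered(List<String> csvLines) {
        // Look columns up by header name rather than by position.
        List<String> header = Arrays.asList(csvLines.get(0).split(","));
        int pkg = header.indexOf("PACKAGE");
        int cls = header.indexOf("CLASS");
        int lineMissed = header.indexOf("LINE_MISSED");
        int branchMissed = header.indexOf("BRANCH_MISSED");

        List<String> feedback = new ArrayList<>();
        for (String row : csvLines.subList(1, csvLines.size())) {
            if (row.isBlank()) continue;
            String[] cols = row.split(",");
            int lines = Integer.parseInt(cols[lineMissed]);
            int branches = Integer.parseInt(cols[branchMissed]);
            if (lines > 0 || branches > 0) {
                feedback.add(cols[pkg] + "." + cols[cls] + ": " + lines
                        + " uncovered lines, " + branches + " uncovered branches");
            }
        }
        return feedback;
    }

    public static void main(String[] args) throws Exception {
        // Assumed default path; pass your own report location as the first argument.
        Path report = Path.of(args.length > 0 ? args[0] : "target/site/jacoco/jacoco.csv");
        uncovered(Files.readAllLines(report)).forEach(System.out::println);
    }
}
```

An empty output means there is nothing left for the agent to cover; anything else is a concrete to-do list for it.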

Mutation tests

Line and branch coverage alone isn't enough. Agents occasionally cheat their way to perfect coverage, for example by asserting only that a JSON object is non-null when the expectation is that every field within it is asserted. Here's where mutation testing shines. For years mutation tests were underutilized, primarily because they were hard to understand and run. But agents are bringing them back.

Mutation tests expose tests that pass without really checking anything by spawning mutants: small deliberate changes to the business logic. If the test suite still passes against a mutant, the assertions covering that code are too weak. Pitest is the go-to tool for running mutation tests in Java. It spawns a variety of mutants and produces an actionable report of the ones that survived. This report can be used as feedback to the agent, which then self-corrects. A perfect mutation coverage ensures that all your assertions are indeed meaningful.
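To make the idea concrete, here is a small hand-rolled sketch; it does not use Pitest itself. `isAdultMutant` is a manually written stand-in for the kind of boundary mutant a mutation tool generates (`>=` changed to `>`), and the two "tests" are plain methods so the example runs without a test framework. All names are hypothetical.

```java
import java.util.function.IntPredicate;

class MutationDemo {
    // Production logic: adults are 18 or older.
    static boolean isAdult(int age) {
        return age >= 18;
    }

    // Hand-written stand-in for a boundary mutant (>= changed to >).
    static boolean isAdultMutant(int age) {
        return age > 18;
    }

    // Weak test: executes both outcomes of the comparison but never probes the boundary.
    static boolean weakTestPasses(IntPredicate impl) {
        return impl.test(30) && !impl.test(5);
    }

    // Strong test: additionally asserts the values on either side of the boundary.
    static boolean strongTestPasses(IntPredicate impl) {
        return impl.test(30) && !impl.test(5) && impl.test(18) && !impl.test(17);
    }

    public static void main(String[] args) {
        System.out.println("weak test passes on mutant: " + weakTestPasses(MutationDemo::isAdultMutant));
        System.out.println("strong test passes on mutant: " + strongTestPasses(MutationDemo::isAdultMutant));
    }
}
```

The weak test passes against both the original and the mutant, so the mutant survives even though coverage looks complete. The strong test fails against the mutant, which is what "killing" it means.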

A combination of perfect line, branch, and mutation coverage is all you need for a solid test harness upon which your agent can start building.

SonarQube

SonarQube is yet another tool that acts as a support structure for vibe-coding. It offers benefits that tests do not. Tests ensure functional correctness and adherence to contracts; Sonar's static analysis checks that the code the agent writes follows best practices. It catches short-term hacks and code smells, and again the output report is fed back to the agent so it can fix itself. Having a SonarQube report continuously feeding into the coding agent keeps the foundation of the code as solid as it can be. A strong foundation helps build a taller building.
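As an example of the kind of short-term hack I mean, the sketch below shows a swallowed exception with a silent fallback (in the comment) next to a cleaned-up version. Static analyzers such as SonarQube commonly report empty catch blocks and overly broad catches; the exact rules and severities depend on your quality profile, so treat this as illustrative. `ConfigReader` and `parsePort` are hypothetical names.

```java
import java.util.Optional;

class ConfigReader {
    // Before (typical shortcut, kept here as a comment):
    //   try { return Integer.parseInt(raw); } catch (Exception e) { }
    //   return 0; // silent fallback hides bad configuration
    //
    // After: narrow exception type, no silent default, caller decides what to do.
    static Optional<Integer> parsePort(String raw) {
        if (raw == null || raw.isBlank()) {
            return Optional.empty();
        }
        try {
            return Optional.of(Integer.parseInt(raw.trim()));
        } catch (NumberFormatException e) {
            return Optional.empty();
        }
    }

    public static void main(String[] args) {
        System.out.println(parsePort("8080"));
        System.out.println(parsePort("not-a-port"));
    }
}
```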

OpenRewrite

OpenRewrite is a personal favorite of mine and I cannot talk about it enough. I use it primarily to help coding agents make large-scale write operations (refactors) across the codebase. Left to themselves, coding agents currently use a combination of shell commands such as grep, find, and ls, along with LSPs, to make changes as accurately as they can.

OpenRewrite solves the problem of bulk writes via pre-defined, curated recipes, and in my experience nothing beats it at executing large-scale refactors. Instead of having the agent come up with refactoring logic from first principles, it is far more efficient to prompt it to use OpenRewrite. This has been particularly useful for large-scale framework migrations such as Spring Boot and Java upgrades. Prompting the agent to plan, write, and execute OpenRewrite recipes works much better than refactoring from scratch. Adding an OpenRewrite SKILL.md works great.

4. Future of SaaS: opportunities for developers to build

The above are some of the frameworks that I have integrated into my agentic workflows, though I feel there's a lot more that can and will be done in this space. JAIPilot is my attempt at building an agent-friendly test harness framework.

This is purely speculative and I am not a fortune-teller. For agents to do well long term, building upon deterministic tools (standalone or integrated with AI) is a necessity; the two fit hand in glove. Though the models are continuously getting better, I do believe that without rule-based guardrails (Claude integrating LSP is a step in this direction), it will be difficult to build complex software. The question remains as to how the guardrails and agents should work with each other. With the launch of the Cursor and Claude marketplaces, scripts and hooks (and of course MCPs) solve this problem to an extent, but there's still a lot of scope left for AI and rule-based frameworks to integrate seamlessly.
